Service Spotlight

By Tim de Vallée · Digital Boutique AI · March 2026

Every AI conversation you have with a cloud provider leaves a footprint. Your prompts, your data, your proprietary business logic — all of it passes through someone else’s infrastructure. For many businesses, that’s a risk they can no longer afford to take.

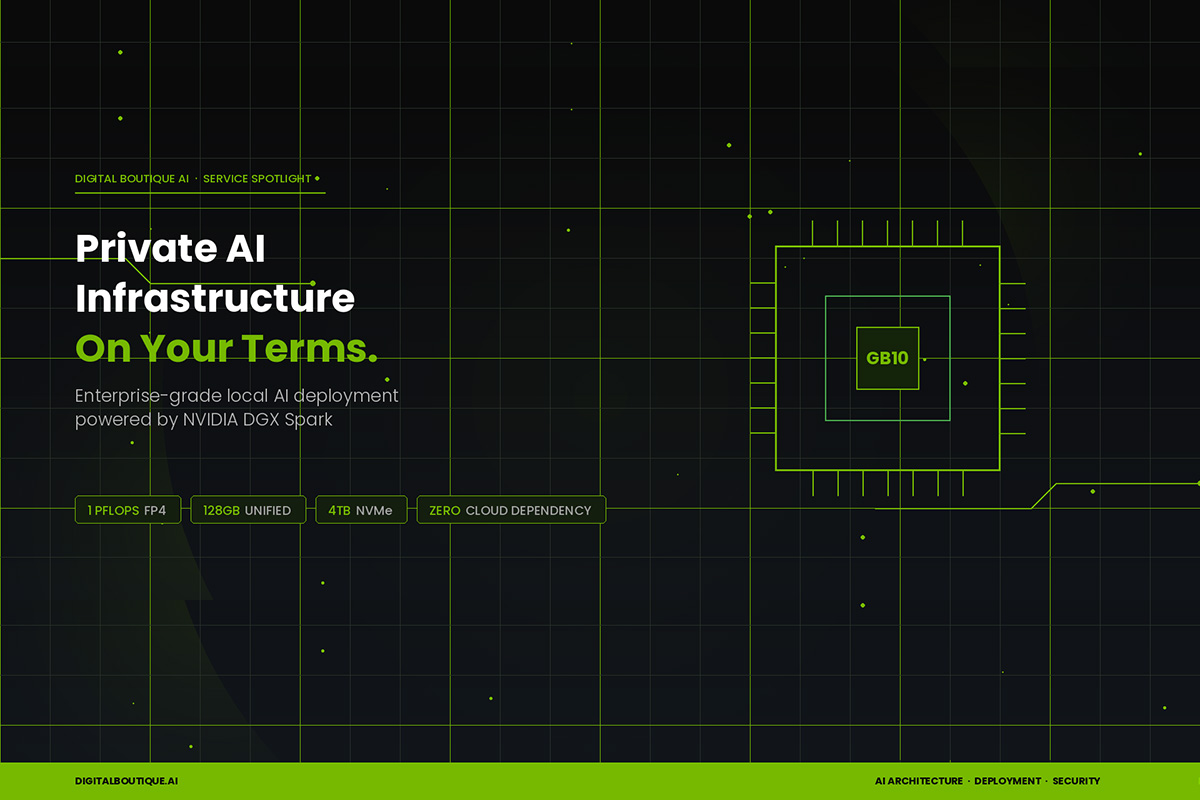

At Digital Boutique AI, we’re now offering private AI infrastructure deployment — fully local, enterprise-grade AI systems that run entirely on your premises. No cloud dependency. No data leaving your building. No third-party access to your intellectual property.

And the hardware that makes this practical? The NVIDIA DGX Spark.

The Hardware: NVIDIA DGX Spark

The DGX Spark is NVIDIA’s answer to a question the industry has been asking for years: how do you get data center-class AI performance without the data center?

Built around the GB10 Grace Blackwell superchip, the Spark delivers 1 petaflop of FP4 AI performance in a form factor smaller than a shoebox. That’s enough compute to run large language models with 70 billion+ parameters locally, handle real-time inference for customer-facing applications, and fine-tune models on your proprietary data — all without a single API call leaving your network.
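As a rough check on the 70-billion-parameter figure, the sketch below estimates how much memory the model weights alone would need at different precisions and compares that with the 128GB of unified memory listed in the specifications. It is a back-of-the-envelope estimate, not a benchmark: it ignores the KV cache, activations, and operating system overhead, and the helper name `weights_gb` is ours.

```python
def weights_gb(params_billions: float, bits_per_param: int) -> float:
    """Approximate memory for model weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    bytes_total = params_billions * 1e9 * bits_per_param / 8
    return bytes_total / 1e9


UNIFIED_MEMORY_GB = 128  # from the Key Specifications list

for bits in (16, 8, 4):
    size = weights_gb(70, bits)
    verdict = "fits" if size < UNIFIED_MEMORY_GB else "does not fit"
    print(f"70B parameters at {bits}-bit: ~{size:.0f} GB of weights, "
          f"{verdict} in {UNIFIED_MEMORY_GB} GB")
```

By this estimate, a 70B model needs about 140 GB at 16-bit precision, which exceeds the unit's memory, but about 35 GB at 4-bit, which leaves headroom for context and runtime overhead. That is why low-precision formats such as FP4 matter for running models of this size on a single unit.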

Key Specifications

Processor: NVIDIA GB10 Grace Blackwell Superchip

AI Performance: 1 PFLOPS (FP4)

Memory: 128GB Coherent Unified Memory

Networking: ConnectX-7 Smart NIC

Storage: 4TB NVMe M.2 (Self-Encrypting)

Footprint: 150mm × 150mm × 50.5mm

Price: Starting at $4,699

Who This Is For

This isn’t about replacing cloud AI for everyone. It’s about giving businesses a choice — and for certain industries, that choice is becoming a requirement, not a preference.

That includes legal and professional services firms handling privileged client data that cannot be transmitted to third-party servers under any circumstances. It includes healthcare organizations managing patient information under HIPAA, where every external API call introduces compliance risk. It includes financial services companies processing proprietary trading strategies, client portfolios, or risk models that represent a core competitive advantage. And it includes any business that treats its internal data, processes, and IP as assets worth protecting at the infrastructure level.

What We Deploy

Digital Boutique AI handles the full stack — from hardware procurement through production deployment. This is not a box-on-a-desk situation. It’s a managed AI infrastructure engagement built for real business use.

AI Model Deployment

We select, configure, and deploy the right open-source LLM for your use case — whether that’s Llama, Mistral, or a custom fine-tuned model trained on your domain data.

Custom AI Applications

Internal chatbots, document analysis tools, automated workflows, and intelligent assistants — all running locally against your private models with zero external dependencies.
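To make "running locally" concrete, here is a minimal sketch of how an internal tool might talk to a model served on the same network. It assumes a local inference server that exposes an OpenAI-compatible chat endpoint, as several open-source serving tools do. The address, port, and model name are placeholders for illustration, not a description of any specific deployment.

```python
import json
import urllib.request

# Assumptions: a local inference server at this address with an
# OpenAI-compatible chat endpoint. Host, port, and model name are placeholders.
LOCAL_ENDPOINT = "http://localhost:8000/v1/chat/completions"
MODEL_NAME = "local-model"  # hypothetical model identifier


def build_chat_request(question: str) -> urllib.request.Request:
    """Build (but do not send) a chat request aimed at the local server."""
    payload = {
        "model": MODEL_NAME,
        "messages": [{"role": "user", "content": question}],
    }
    return urllib.request.Request(
        LOCAL_ENDPOINT,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(question: str) -> str:
    """Send the question to the local server and return the reply text."""
    with urllib.request.urlopen(build_chat_request(question), timeout=60) as resp:
        body = json.load(resp)
    return body["choices"][0]["message"]["content"]
```

The only address in the code is `localhost`, so the prompt and the response stay on the machine that hosts the model. Calling `ask("Summarize this contract clause")` would require such a server to be running.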

Fine-Tuning & Training

The Spark's 128GB of unified memory and 4TB of encrypted storage let us fine-tune models on your proprietary data, so the responses reflect your business rather than generic AI output.

Ongoing Management

Model updates, performance monitoring, security patching, and scaling guidance. We treat your AI infrastructure the way it should be treated: as a critical business system.

The Business Case

Beyond privacy and compliance, the economics are compelling. Businesses spending $2,000–$5,000+ per month on cloud AI API costs can potentially recoup the hardware investment within the first quarter. Once the infrastructure is in place, the marginal cost of each inference drops to little more than the electricity it uses. It's your hardware and your models.
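The payback claim can be checked with simple arithmetic. The sketch below divides the $4,699 starting price by the monthly cloud spend it would replace. It covers the hardware price only. Deployment and management fees are not included, and the optional running-cost parameter (for electricity and similar costs) defaults to zero as a simplification.

```python
HARDWARE_PRICE_USD = 4_699  # starting price from the Key Specifications list


def breakeven_months(monthly_cloud_spend: float,
                     monthly_local_running_cost: float = 0.0) -> float:
    """Months until avoided cloud spend equals the hardware price."""
    savings = monthly_cloud_spend - monthly_local_running_cost
    if savings <= 0:
        raise ValueError("local running cost must be below cloud spend to break even")
    return HARDWARE_PRICE_USD / savings


for spend in (2_000, 5_000):
    months = breakeven_months(spend)
    print(f"${spend:,}/month cloud spend: hardware recouped in ~{months:.1f} months")
```

At $2,000 per month the hardware pays for itself in about 2.3 months, and at $5,000 per month in under one month, both inside a single quarter under these assumptions.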

And unlike cloud API pricing, which scales linearly with usage, local infrastructure is a fixed cost. More use cases, more users, more queries: the cost stays the same, up to the throughput the hardware can sustain.

Ready to Own Your AI Infrastructure?

Let’s discuss what private AI deployment looks like for your business.

Digital Boutique AI is a division of Digital Universe, based in Vista, California. We architect and deploy AI solutions for businesses that take their data seriously. digitalboutique.ai